Smoother Streaming with BBR

We flipped one server flag and got more download bandwidth for Spotify users. That is the TL;DR of this A/B experiment with BBR, a new congestion control option for TCP.

Background

BBR is a TCP congestion control algorithm developed by Google. It aims to make Internet data transfers faster, which is no small feat!

How Spotify Streams Music

The basic principle behind Spotify streaming is simple. We store each encoded music track as a file, replicated on HTTP servers across the world. When a user plays a song, the Spotify app fetches the file in chunks from a nearby server with HTTP GET range requests. A typical chunk size is 512 kB.
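To make the chunked fetch concrete, here is a minimal sketch of the `Range` header values a client could send for one file. The 512 kB chunk size is from the text (read here as 512 × 1024 bytes, which is an assumption); the function name and the example file size are hypothetical, and this is not the actual Spotify client logic.

```python
CHUNK_SIZE = 512 * 1024  # assumption: "512 kB" read as 512 * 1024 bytes


def range_headers(file_size, chunk_size=CHUNK_SIZE):
    """Return HTTP Range header values that cover a file of file_size bytes."""
    headers = []
    for start in range(0, file_size, chunk_size):
        # The end offset of an HTTP byte range is inclusive.
        end = min(start + chunk_size, file_size) - 1
        headers.append(f"bytes={start}-{end}")
    return headers


if __name__ == "__main__":
    # Hypothetical 1.2 MB track: two full chunks and one shorter final chunk.
    for value in range_headers(1_200_000):
        print("Range:", value)
```

Each value would go into the `Range` header of a separate GET request, so the app can start playback once the first chunk arrives.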

We like our audio playback instant, and silky smooth. To keep it that way we track two primary metrics:

Playback latency, the time from click to sound.

Stutter, the number of skips/pauses during playback.

Stutter happens mostly due to audio buffer underruns when download bandwidth is low. So our metrics map closely to connection time and transfer bandwidth. Classic stuff.

Now, how could BBR improve our streaming?

TCP Congestion What?

Let’s look at a file transfer from server to client. The server sends data in TCP packets. The client confirms delivery by returning ACKs. The connection has a limited capacity depending on hardware and network conditions. If the server sends too many packets too quickly, they will simply be dropped along the way. The server registers this as missing ACKs. The role of a congestion control algorithm is to look at the flow of sent packets and returned ACKs and decide on a send rate.

Many popular options, like CUBIC, focus on packet loss. As long as there is no packet loss, they increase send rate, and when packets start disappearing, they back off. One problem with this approach is a tendency to overreact to small amounts of random packet loss, interpreting it as a sign of congestion.

BBR, on the other hand, looks at round trip time and arrival rate of packets to build an internal model of the connection capacity. Once it has measured the current bandwidth, it keeps the send rate at that level even if there is some noise in the form of packet loss.
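The contrast between the two approaches can be sketched in a few lines. This is a toy model, not the kernel implementation: the class and function names are hypothetical, the windows are counted in samples rather than in time as real BBR does, and the loss-based rule is a generic additive-increase/halve-on-loss stand-in rather than CUBIC's actual growth function.

```python
from collections import deque


class ToyPathModel:
    """Toy BBR-style model: max recent delivery rate and min recent RTT."""

    def __init__(self, window=10):
        self.rates = deque(maxlen=window)  # delivery rate samples, bytes/second
        self.rtts = deque(maxlen=window)   # round trip time samples, seconds

    def on_ack(self, delivered_bytes, interval_s, rtt_s):
        """Record one delivery-rate sample and one RTT sample."""
        self.rates.append(delivered_bytes / interval_s)
        self.rtts.append(rtt_s)

    def bottleneck_bw(self):
        return max(self.rates)

    def min_rtt(self):
        return min(self.rtts)

    def bdp_bytes(self):
        """Bandwidth-delay product: roughly how much data the path can hold."""
        return self.bottleneck_bw() * self.min_rtt()


def loss_based_rate(rate, loss_seen, step=10_000.0):
    """Toy loss-based rule: grow by a fixed step, halve on any loss."""
    return rate / 2 if loss_seen else rate + step
```

In this sketch a single lost packet halves the loss-based rate, while the model-based estimate only changes when the measured delivery rate or round trip time changes.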

There is more to BBR than this, but the throughput improvements are what interest us here.

The Experiment

Many internet protocol changes require coordinated updates to clients and servers (looking at you, IPv6!). BBR is different: it only needs to be enabled on the sender’s side. It can even be enabled after the socket is already opened!
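On Linux, this per-socket switch is exposed through the `TCP_CONGESTION` socket option, which Python makes available as `socket.TCP_CONGESTION`. The helper below is a hedged sketch with a hypothetical name; it assumes a Linux host and only succeeds if the kernel has BBR available, otherwise the socket keeps its current algorithm.

```python
import socket


def try_enable_bbr(sock):
    """Try to switch an open TCP socket to BBR.

    Returns the name of the congestion control algorithm now in use,
    or None if the option is unsupported on this platform.
    """
    if not hasattr(socket, "TCP_CONGESTION"):
        return None  # option not exposed here (e.g. non-Linux platforms)
    try:
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_CONGESTION, b"bbr")
    except OSError:
        pass  # BBR unavailable or not permitted; keep the current algorithm
    try:
        raw = sock.getsockopt(socket.IPPROTO_TCP, socket.TCP_CONGESTION, 16)
    except OSError:
        return None
    # The kernel returns a NUL-padded name such as b"cubic\x00...".
    return raw.split(b"\x00", 1)[0].decode()


if __name__ == "__main__":
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        print(try_enable_bbr(s))
```

Nothing changes on the receiving side, which is why the experiment needed no client update beyond picking a hostname.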

For this experiment, we set up a random subset of users to include “bbr” in the audio request hostname as a flag, and add a couple of lines to the server config:

    if (req.http.x-original-host == "audio-fa-bbr.spotify.com" && client.requests == 1) {
      set client.socket.congestion_algorithm = "bbr";
    }

The other requests get served using the default, CUBIC.
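The client-side half of the split, choosing which hostname a user requests audio from, could look roughly like the sketch below. Only the BBR hostname appears in the config above; the control hostname, the function name, the 50% split, and the hash-based bucketing are all assumptions made for illustration, not a description of how the groups were actually assigned.

```python
import hashlib

BBR_HOST = "audio-fa-bbr.spotify.com"  # hostname from the server config
CONTROL_HOST = "audio-fa.spotify.com"  # hypothetical control hostname
BUCKETS = 10_000


def audio_host(user_id, treatment_fraction=0.5):
    """Deterministically map a user ID to the treatment or control hostname."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % BUCKETS
    # Users whose bucket falls below the cutoff get the BBR hostname.
    return BBR_HOST if bucket < treatment_fraction * BUCKETS else CONTROL_HOST
```

Hashing the user ID keeps each user in the same group across sessions, which helps when metrics are averaged per day.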

We now have treatment and control groups for our A/B test. For each group we measure:

Playback latency (median, p90, p99)

Stutter (average count per song)

Bandwidth, average over the song download (median, p10, p01)
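As an illustration of how such summaries can be computed for one group, here is a small sketch using Python's standard `statistics` module. The function name and the sample values are hypothetical, and the interpolation method is an arbitrary choice; the article does not say how the percentiles were calculated.

```python
import statistics


def summarize(samples):
    """Return the percentiles used in the experiment for one metric."""
    # n=100 yields 99 cut points; cuts[k - 1] is the k-th percentile.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {
        "p01": cuts[0],
        "p10": cuts[9],
        "median": cuts[49],
        "p90": cuts[89],
        "p99": cuts[98],
    }


if __name__ == "__main__":
    # Made-up values 0..100 standing in for per-song measurements.
    print(summarize(range(101)))
```

For latency the high percentiles (p90, p99) capture the worst experiences, while for bandwidth it is the low ones (p10, p01).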

Results

Taking daily averages, stutter decreased 6-10% for the BBR group. Bandwidth increased by 10-15% for the slower download cohorts, and by 5-7% for the median. There was no difference in latency between groups.

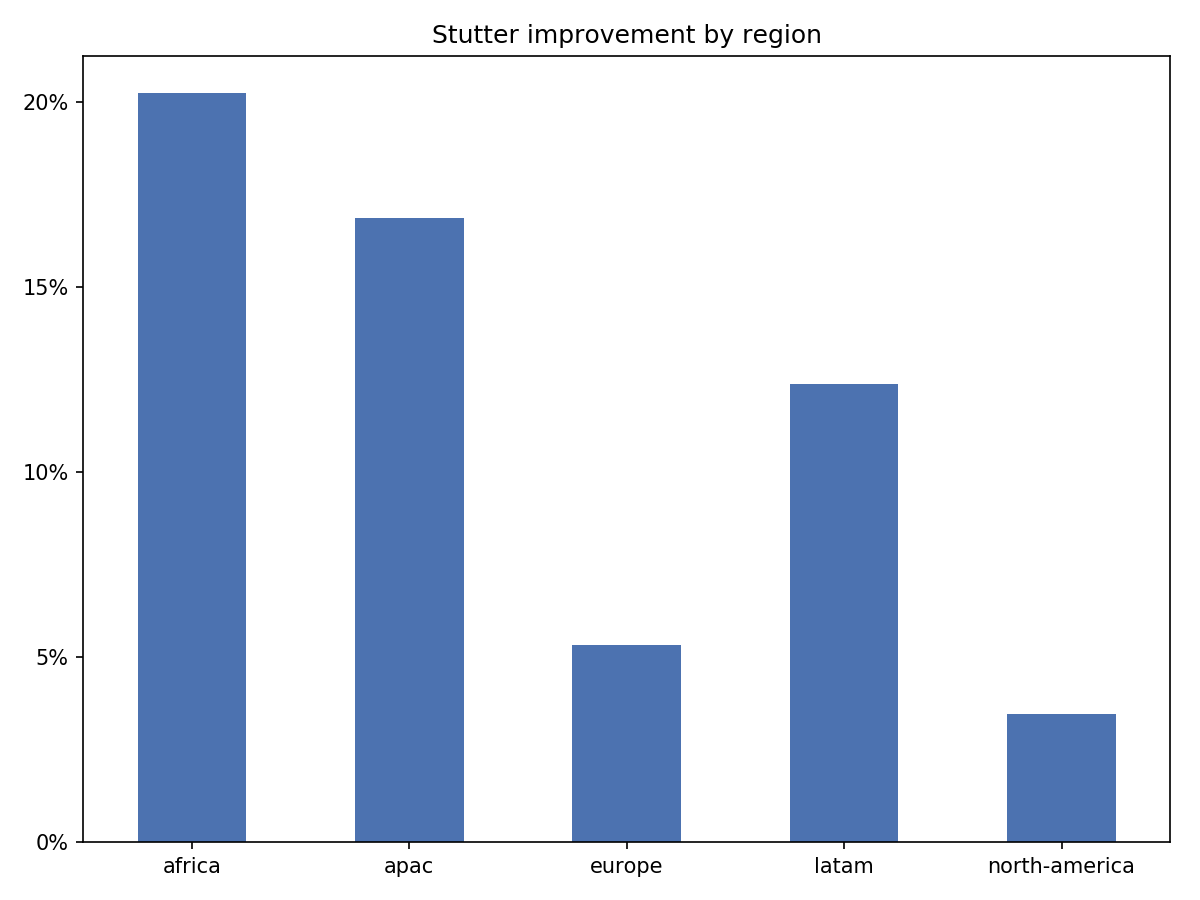

Significant Variation Across Geo Regions

We saw most of the improvements in Asia-Pacific and Latin America, with 17% and 12% decreases in stutter, respectively. Bandwidth increased 15-25% for slower downloads, and around 10% for the median.

By comparison, Europe and North America had 3-5% improvement in stutter, and around 5% in bandwidth.

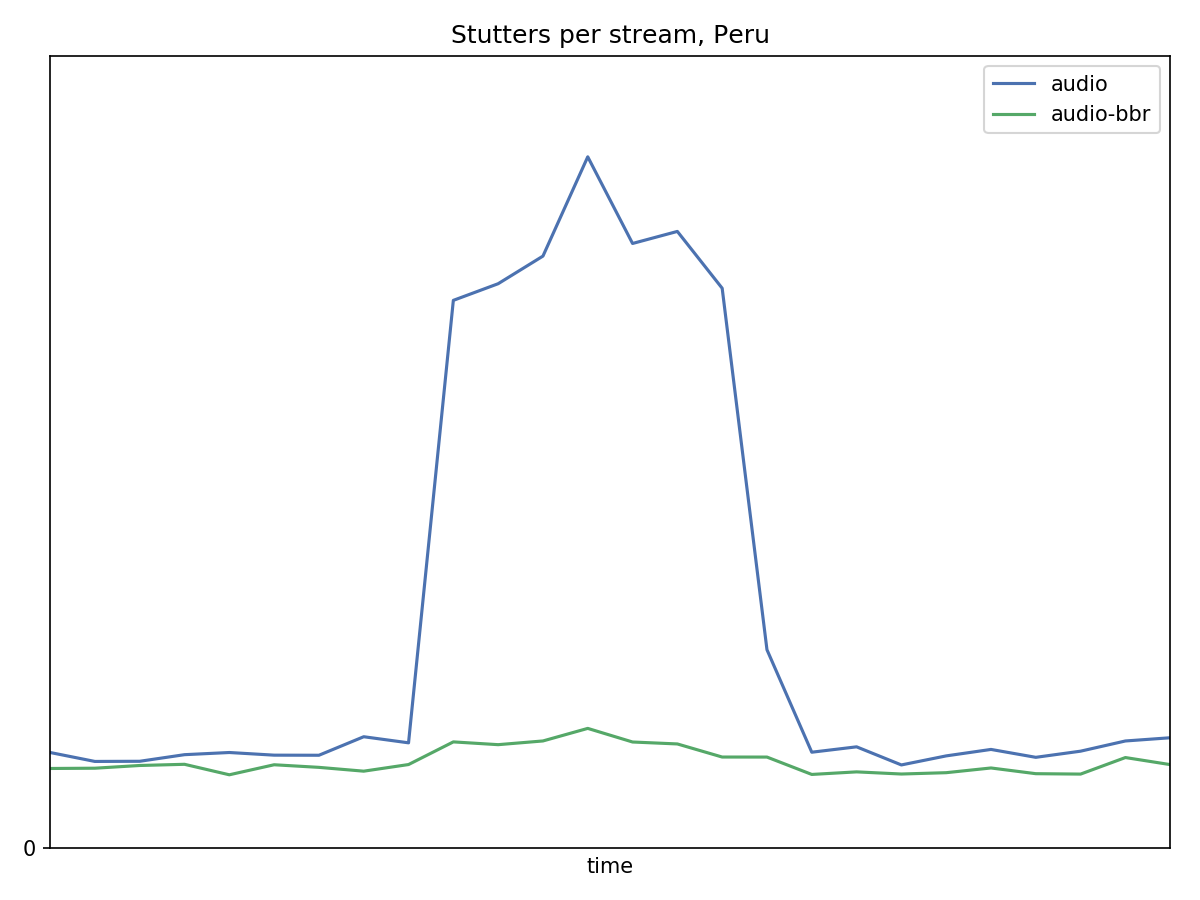

Bonus: Upstream Congestion Incident

During our experiment, we experienced a network congestion event with a South American upstream provider. This is where BBR really got to shine!

In Peru, the non-BBR group saw a 400-500% increase in stutter. In the BBR group, stutter only increased 30-50%.

In this scenario, the BBR group had 4x bandwidth for slower downloads (the 10th percentile), 2x higher median bandwidth, and 5x less stutter!

This is the difference between our users barely noticing, vs. getting playback issues bad enough to contact Customer Support.

Discussion

Our results are in line with reports from GCP, YouTube and Dropbox traffic. Performance with increased packet loss is also in line with results from earlier Google experiments.

There have been experiments showing that BBR may crowd out CUBIC traffic, among other problems. In the scope of our own traffic, we have not seen any indications of that so far. For example, we use a few different CDN partners for audio delivery, but we only ran the BBR experiment on one. The non-BBR group does not show any noticeable performance decrease vs other CDNs. We will continue to follow this closely.

We are very pleased with BBR’s performance so far. Moving our playback quality metrics in the right direction is fiendishly difficult, and normally involves trade-offs, e.g. stutter versus audio bitrate. With BBR, we have seen a substantial improvement at no apparent cost.