How We Improved the Development Experience for our Client Developers

TL;DR The core motivation for Spotify’s Client Platform (CliP) team is empowering and unblocking client developers and giving teams the tools they need to ensure a happy and satisfying developer experience (DX). In line with this, we wanted to improve the coding experience for our development teams through infrastructure changes. We conducted research among 318 engineers and learned that:

Long build times were compromising developer productivity and satisfaction, according to our Engineering Satisfaction survey results.

We set out to improve build times through multiple changes, one of which was testing out different hardware for our build systems.

Our analyses found using Apple® silicon machines to be empirically faster and financially beneficial.

Builds on Apple silicon machines were 43% faster overall than on Intel-based Mac® systems, and up to 50% faster for Android builds and 40% faster for iOS builds.

We worked with Tech Procurement to ensure an early hardware upgrade for client developers, outside of the normal upgrade cycle, for a better client developer experience.

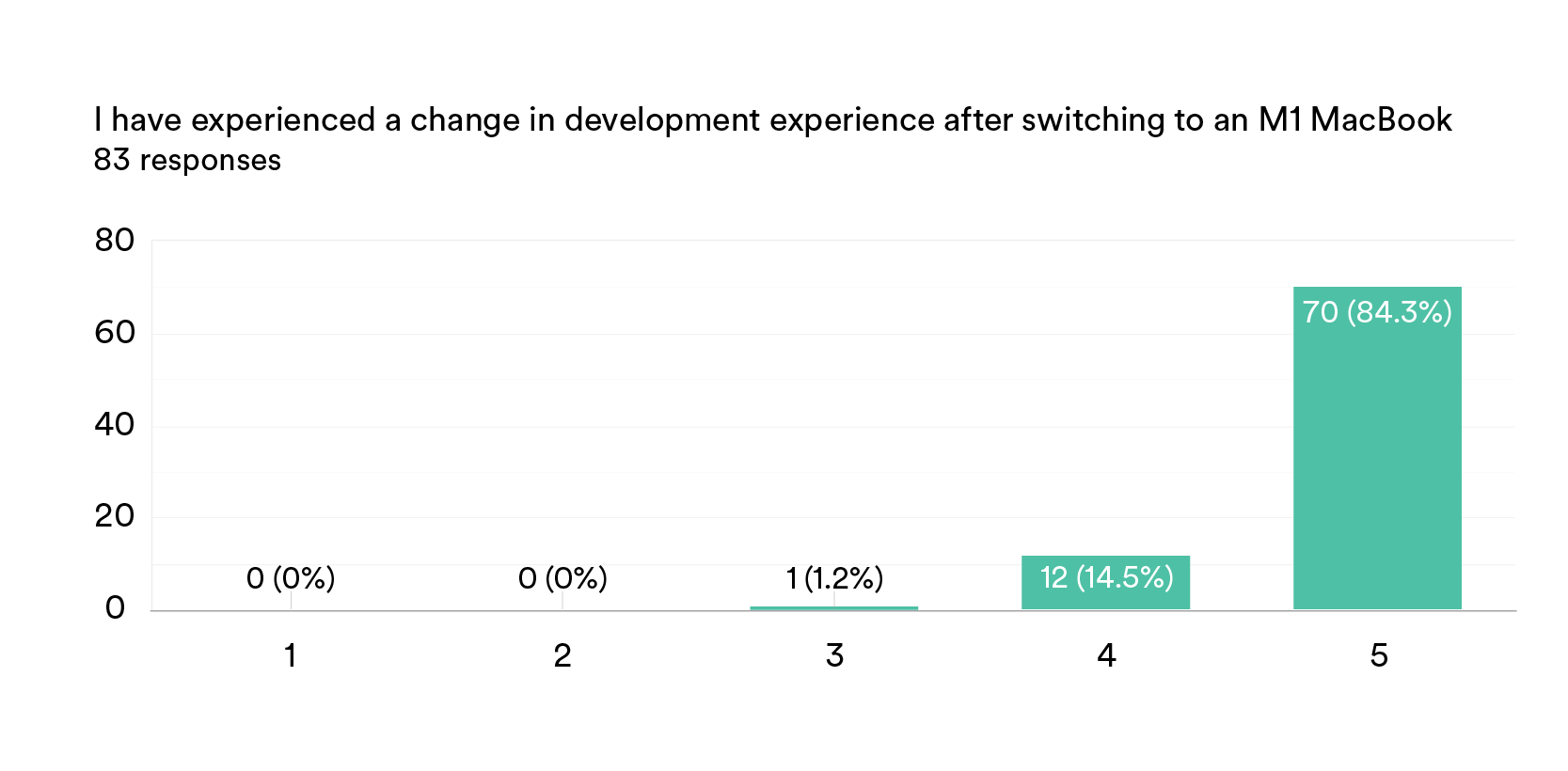

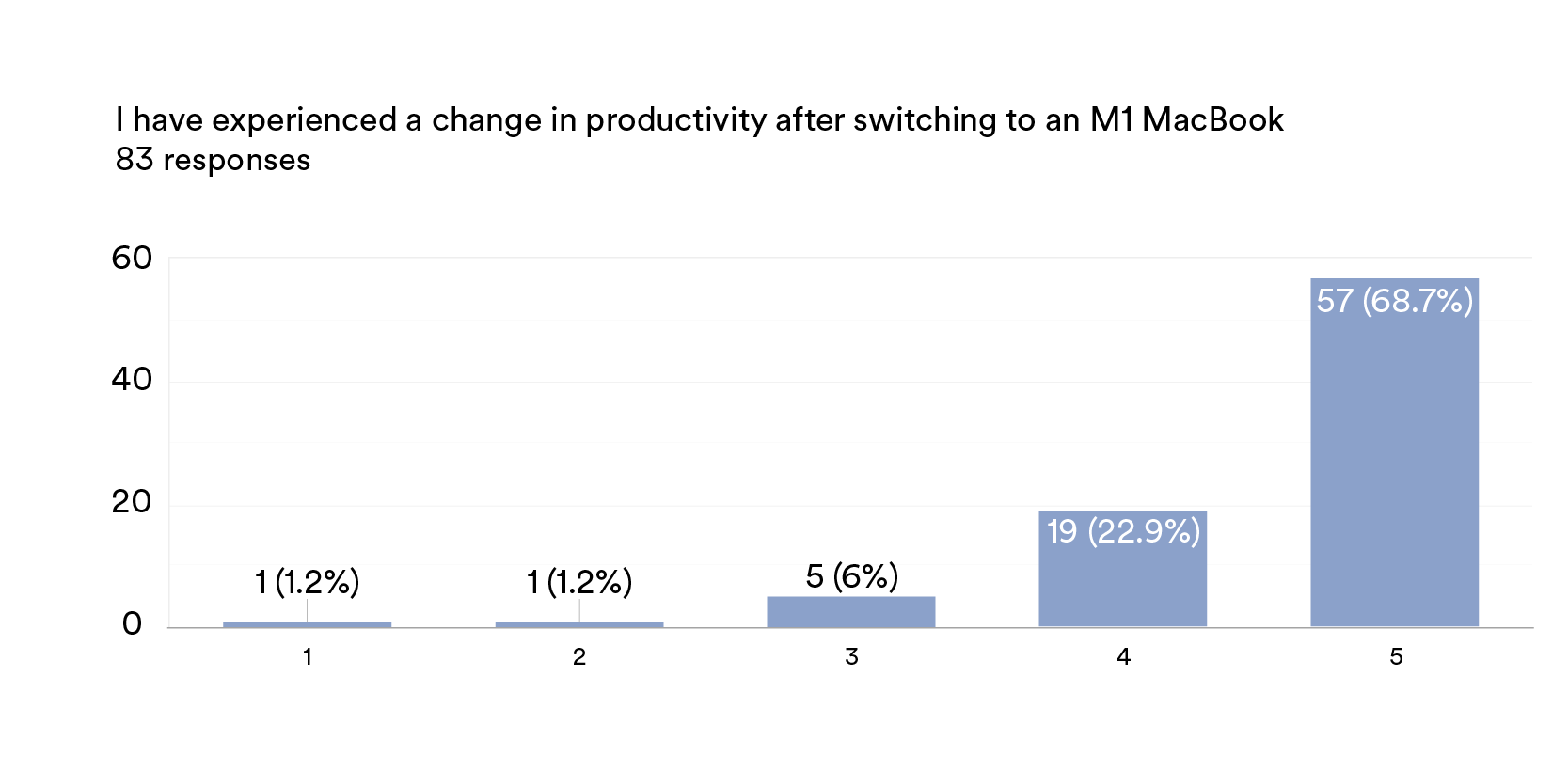

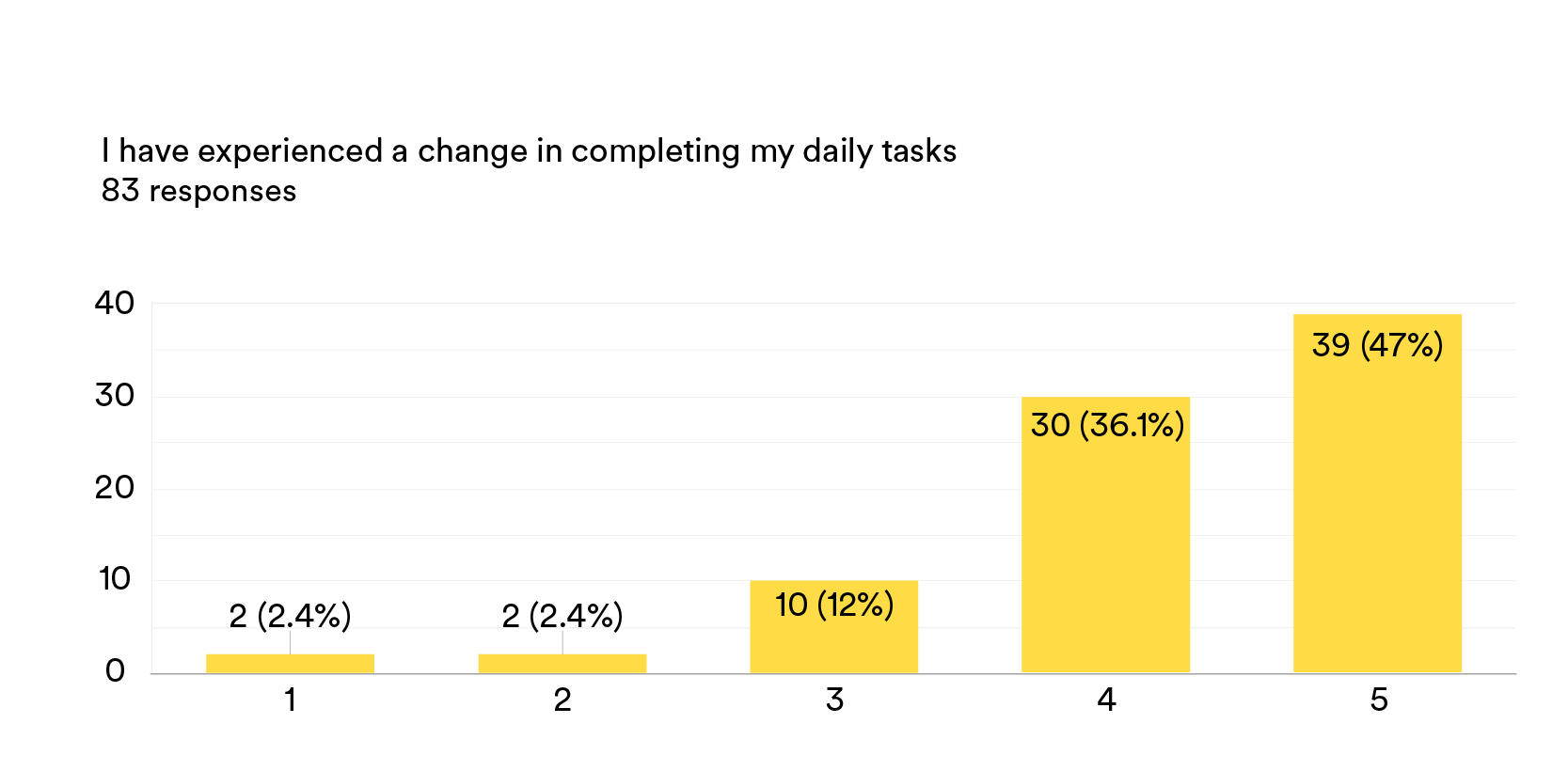

We sent out a feedback survey to the developers who upgraded their laptops. More than 90% of surveyed team members gave a rating of 4 or 5 (out of 5) for both productivity perception and development experience.

What motivated us to perform this research?

At Spotify, we conduct quarterly Engineering Satisfaction surveys to identify obstacles that diminish developers’ overall satisfaction and productivity. One such obstacle was longer build times. During early testing performed last year, we ran the same builds on different machines and found that switching to Apple silicon for development could reduce iOS build times by 20% to 30% (and up to 50% with the new M1 Pro and M1 Max chips). Therefore, in 2022, we prioritised creating a great developer experience through improvements to our developers’ machine architecture.

What is the problem we are trying to solve?

We were motivated to identify ways to improve developer satisfaction through architectural improvements. A wonderful blog post by a Reddit staff engineer inspired us not to stop at just system performance, but also to validate a financial use case for this move.

Our problem statement can be summarised in two points:

Do we have machines good enough* to be used by Spotify developers?

Will the performance improvements justify the monetary investment required?

*What is “good enough”? For the purpose of our analysis, we define “good enough” as a statistically significant performance improvement in Android/iOS local build times.

When do we know we succeeded?

Given the processing capabilities of M1s, we hypothesised that average local build times on M1 machines would be significantly shorter than on non-M1 machines:

AvgLocalBuildTime(M1) < AvgLocalBuildTime(non-M1)
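One way to check this inequality for statistical significance is a one-sided permutation test on local build times. The sketch below is illustrative only: the post does not say which test was used, and the function name, the sample values, and the 0.05 threshold are all assumptions.

```python
import random
from statistics import mean


def permutation_p_value(m1_times, other_times, n_resamples=5000, seed=0):
    """Estimate a one-sided p-value for mean(m1_times) < mean(other_times).

    Hypothetical helper: shuffles the pooled samples to simulate the null
    hypothesis that machine type has no effect on build time.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    observed = mean(other_times) - mean(m1_times)
    pooled = list(m1_times) + list(other_times)
    n_m1 = len(m1_times)
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        shuffled_diff = mean(pooled[n_m1:]) - mean(pooled[:n_m1])
        if shuffled_diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_resamples + 1)


# Made-up build times in seconds, not Spotify's measurements.
m1_builds = [310, 295, 330, 305, 320, 300, 315, 290]
intel_builds = [540, 560, 525, 580, 550, 535, 570, 545]

p_value = permutation_p_value(m1_builds, intel_builds)
print(f"one-sided p-value: {p_value:.4f}")
print("significant at 0.05" if p_value < 0.05 else "not significant at 0.05")
```

A permutation test is used here because it needs only the standard library and makes no assumption about how build times are distributed; a Welch's t-test would be a reasonable alternative.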

Empirical findings

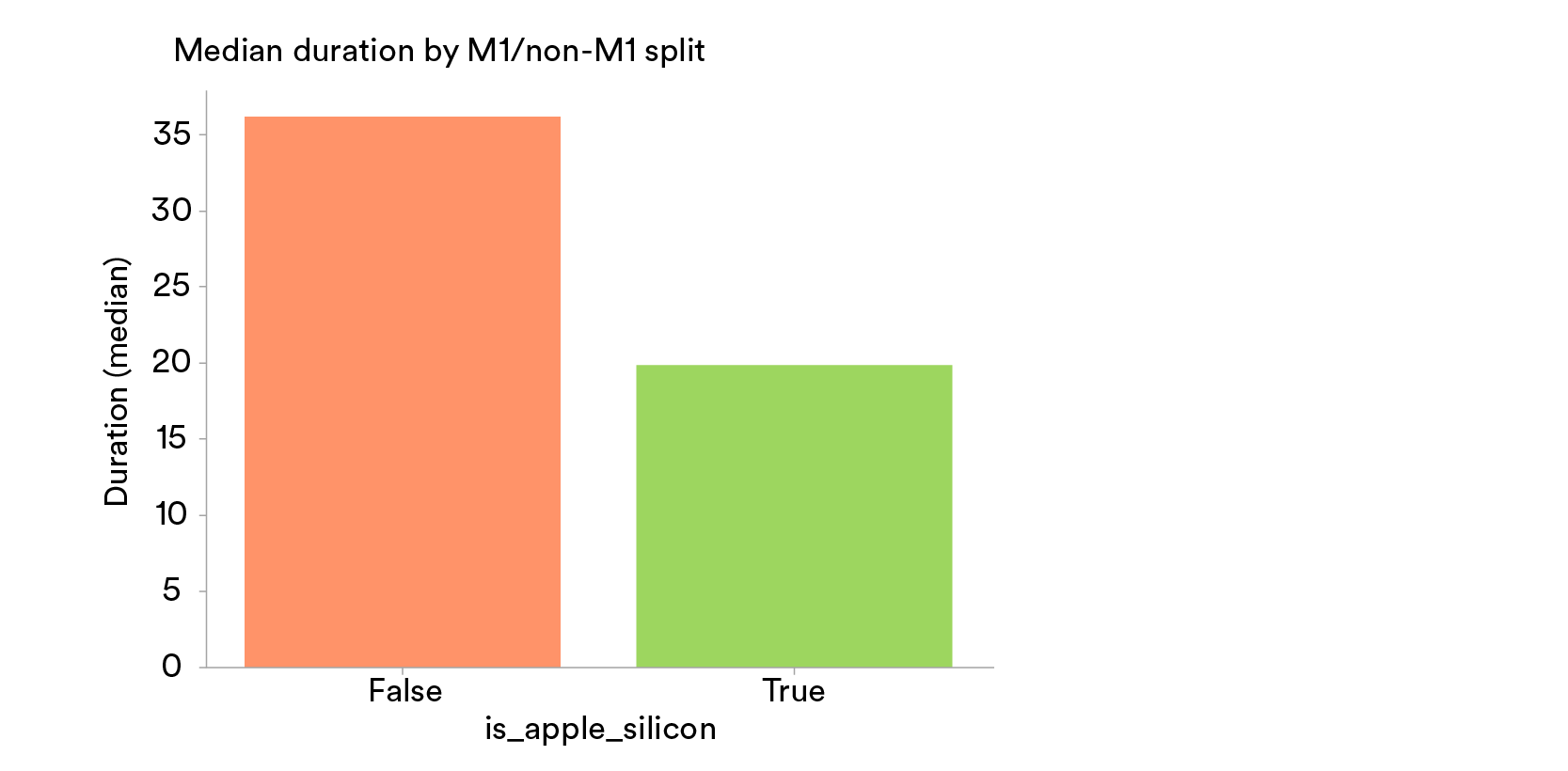

Overall: Apple silicon is about 43% faster.

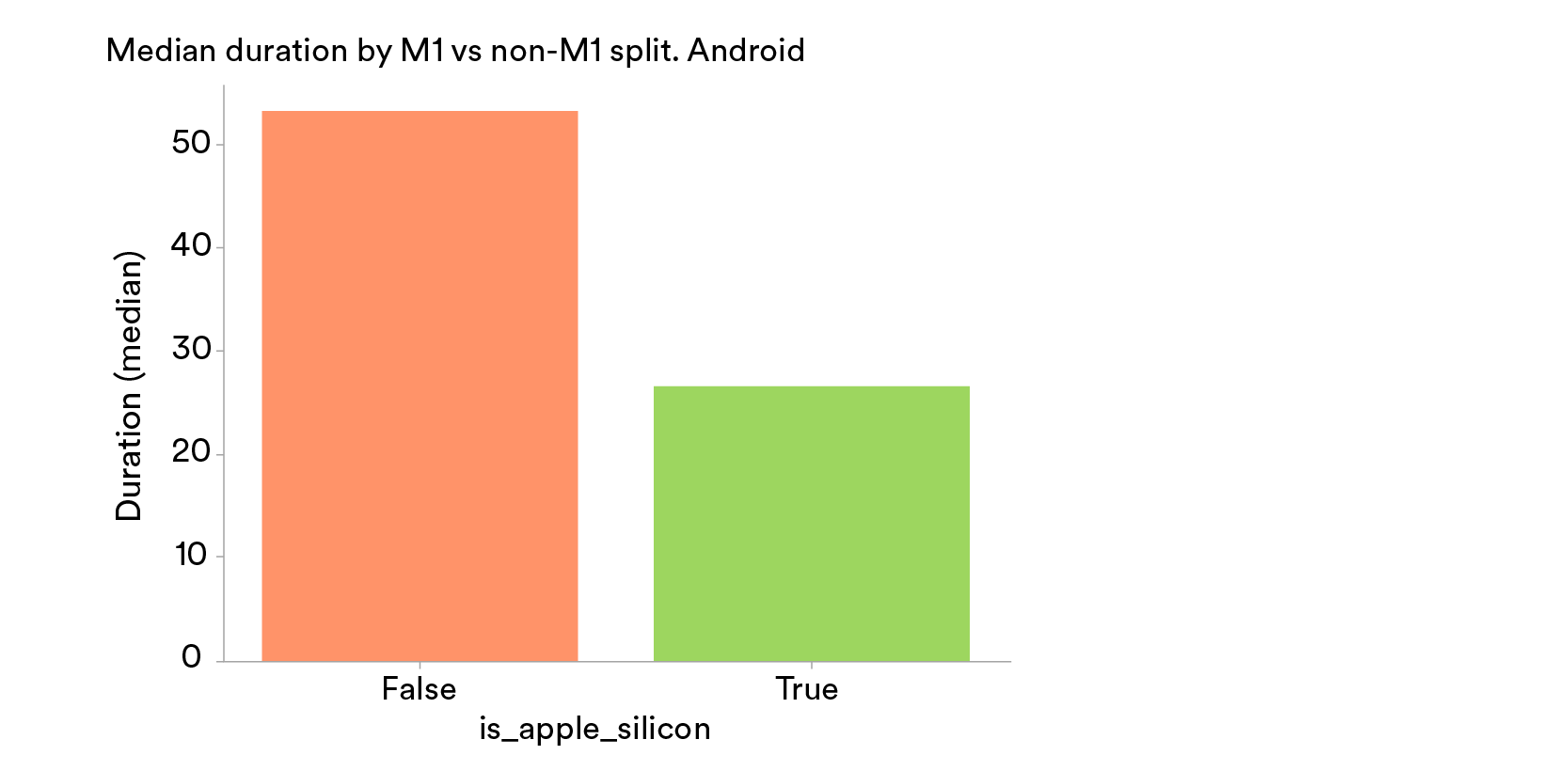

Android: Apple silicon is about 50% faster.

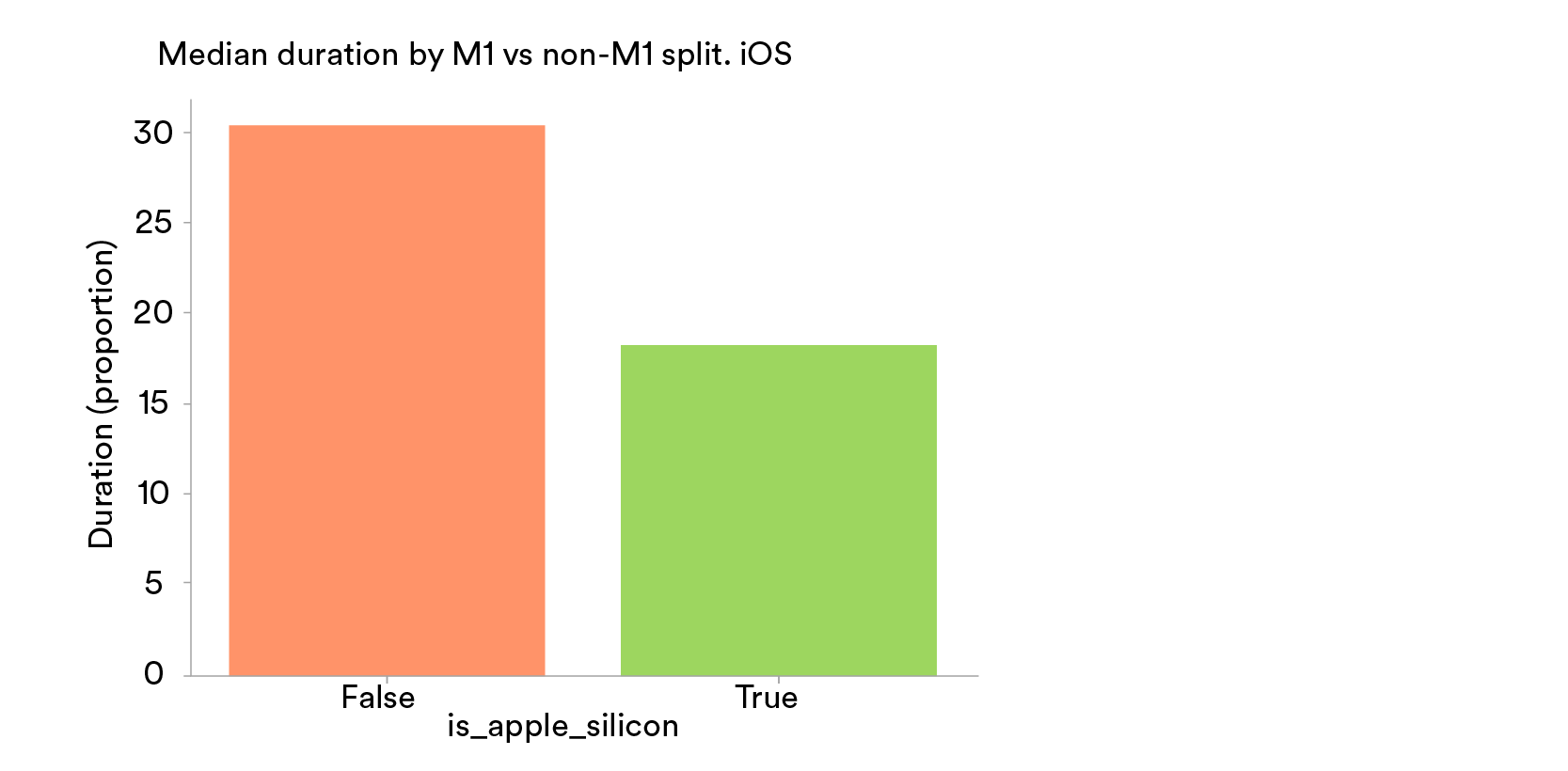

iOS: Apple silicon is about 40% faster.

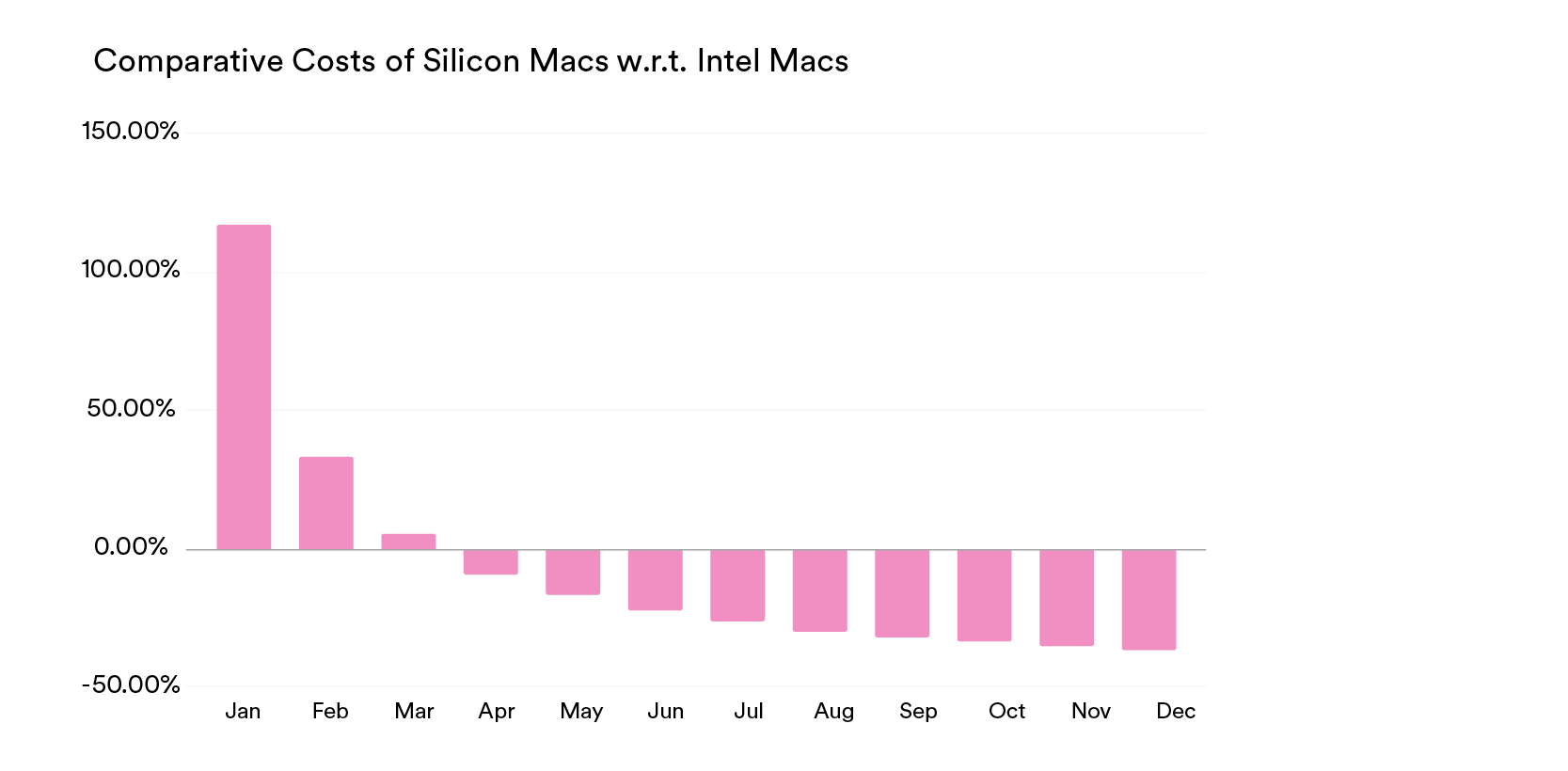

Are there any financial benefits in upgrading to M1s?

In our analysis, we found the upgrade to be cost-effective as well: we would break even in about three months, and we could potentially save up to 36% in engineering costs per team.
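The post does not publish its financial model. As a rough illustration, a break-even estimate can be framed as the hardware cost divided by the monthly value of engineer time no longer spent waiting on builds. Every input below (cost, builds per day, minutes saved, hourly cost, working days) is a hypothetical placeholder, not a Spotify figure.

```python
def break_even_months(hardware_cost, builds_per_day, minutes_saved_per_build,
                      hourly_cost, working_days_per_month=21):
    """Months until time saved on builds pays for one machine (hypothetical model)."""
    hours_saved_per_month = (
        builds_per_day * minutes_saved_per_build / 60 * working_days_per_month
    )
    monthly_saving = hours_saved_per_month * hourly_cost
    if monthly_saving <= 0:
        raise ValueError("the upgrade must save some time to ever break even")
    return hardware_cost / monthly_saving


# Placeholder inputs chosen only to exercise the formula.
months = break_even_months(
    hardware_cost=2400,
    builds_per_day=10,
    minutes_saved_per_build=3,
    hourly_cost=100,
    working_days_per_month=20,
)
print(f"break-even after {months:.1f} months")
```

This simple model ignores resale value of the old machine and assumes every minute saved is productive time, so it should be read as an upper bound on the benefit.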

Our recommendation

We had two questions to answer to be completely satisfied. Were the machines actually better in build time performance? And was the performance improvement worth the investment required to procure the machines?

Based on the empirical findings and our financial analysis, upgrading to Apple silicon machines definitely meets our criteria and is a viable solution to reduce developer build time woes.

How does one prioritise and scale in a distributed-first world?

To enable this upgrade, we needed to add support for the new machines to our development infrastructure. We set up a workstream dedicated to assisting our developers while we worked towards full support. We then asked teams across the organisation to make sure dependencies and relevant tooling were updated accordingly.

Given that these machines were proven to be better in terms of build performance, we recommended an expedited, out-of-the-normal-cycle machine upgrade for client developers. To do this, we needed to answer two questions: how should we prioritise within the client developer workforce? And how do we scale for a distributed-first workforce?

The prioritisation work was split into two streams: new joiners and current employees. For the first group, we collaborated with our colleagues in Tech Procurement and updated the available hardware in our “normal” tech cycle, making sure that new developers received the best setup from the start. For the second group, prioritisation was a bit more complicated. We decided to look at current hardware specifications, focusing on older models and those with lower RAM, as we determined that both of these factors have a significant impact on developer experience.
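The prioritisation rule for current employees can be sketched as a simple sort. The post does not say how model age and RAM were weighted against each other, so the ordering below (oldest model first, then lowest RAM) is an assumption, and the `Laptop` type and fleet data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Laptop:
    owner: str
    model_year: int
    ram_gb: int


def upgrade_order(laptops):
    """Oldest models first; among equal model years, lowest RAM first."""
    return sorted(laptops, key=lambda laptop: (laptop.model_year, laptop.ram_gb))


# Made-up fleet used only to show the ordering.
fleet = [
    Laptop("dev-a", 2019, 32),
    Laptop("dev-b", 2017, 16),
    Laptop("dev-c", 2019, 16),
    Laptop("dev-d", 2017, 32),
]
queue = [laptop.owner for laptop in upgrade_order(fleet)]
print(queue)
```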

At Spotify, our distributed-first way of working allows us to have developers located around the world. And because of our commitment to reduce our carbon footprint, scaling this upgrade meant not only distributing the new machines, but also collecting the old ones to ensure they would be properly recycled. This was done in close collaboration with Tech Procurement and would not have been possible without the support from our client developer workforce.

This wasn’t a small undertaking. It involved shipping to hundreds of Spotifiers in 11 countries, with five different suppliers. Accounting for keyboard preferences (i.e., languages) meant that we had five different SKUs that faced various supply chain constraints, depending on where the machine was finally going to be shipped. We forged relationships with resellers and OEMs, who helped us to minimise delays in getting M1s to our eager engineers.

Developer feedback, six months in

To measure the success of the upgrade, we surveyed 100 participating client developers within the organisation and leveraged the services of our internal user research experts to formulate questions that would help us capture the essence of the impact. The survey was structured around three pillars:

Development experience

Productivity perception

Task success

Development experience

Almost all the surveyed developers (about 98.8%) rated their development experience to be 4 or 5, where 5 was the best experience score.

Productivity perception

Most of the surveyed developers (about 91.6%) rated their productivity perception to be 4 or 5, where 5 was the highest productivity rating.

Task success

About 83% of surveyed developers experienced a positive change (4 or 5) in task completion, where 5 was the best experience score.

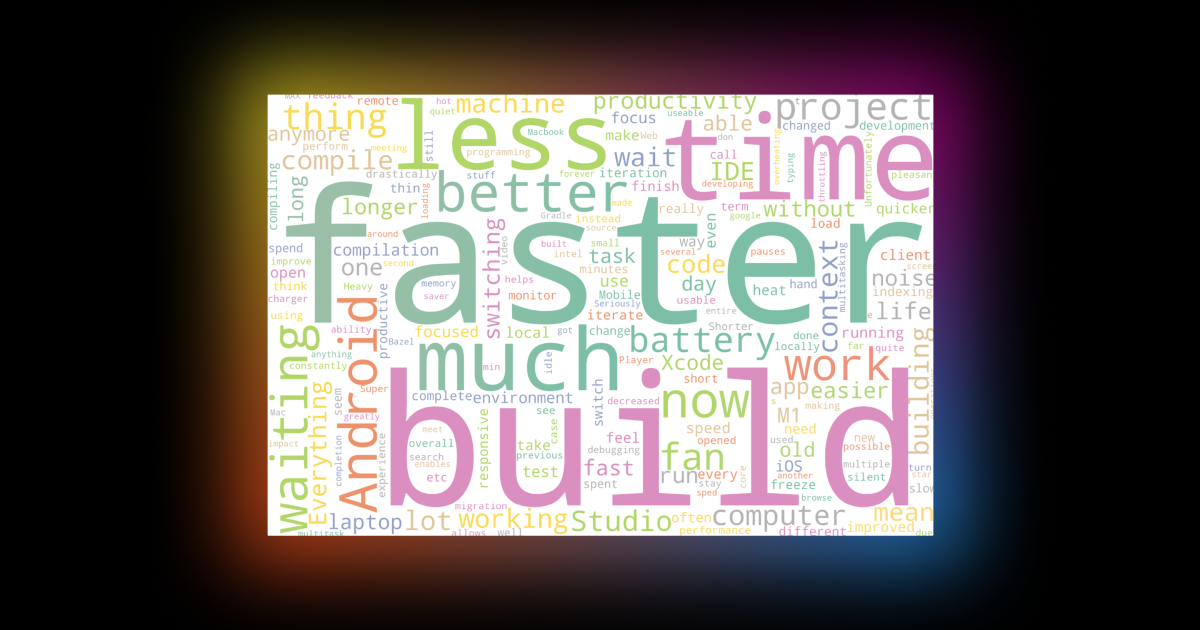

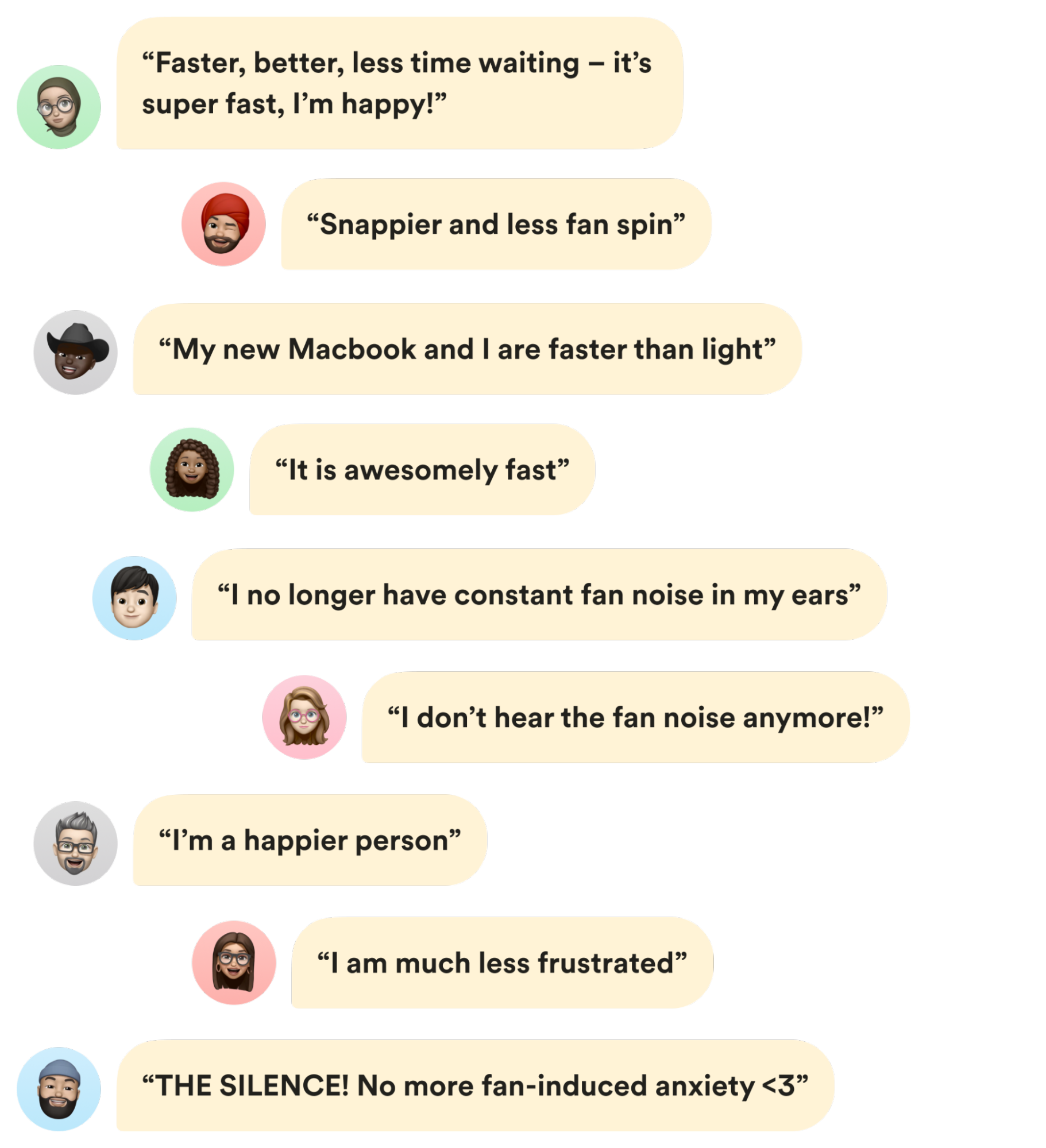

Free-text responses

We also analysed their free-text responses. Our developers shared that builds ran much faster and required less waiting, along with other positive experiences!

What we learned

We gave critical importance to an organised feedback loop and focussed on supporting and guiding our developers. While external factors caused long lead times from our vendors, resulting in multiple changes in estimated delivery times, we were able to overcome these challenges by maintaining a steady information flow, immediately updating our developers with new information, and quickly answering questions. Our efforts to communicate transparently and incorporate feedback were met with understanding and patience from the client workforce.

Sometimes we need to deviate from our standard best practices to make sure we continue to empower employees, enabling them to reach their full potential. Creating a positive developer experience remains our top priority, and the feedback we received from our survey shows that we are on the right track towards achieving our developer satisfaction goals.

Mac® is a trademark of Apple Inc.