Search Journey Towards Better Experimentation Practices

At Spotify, we aim to build and improve our product in a data-informed way. To do that, teams are encouraged to generate and test hypotheses by running experiments and gathering evidence for what works and what doesn’t.

In the Search team, in our journey towards this goal, we have learned that, besides having the ambition, we need at least two more things:

An experimentation platform that allows us to run experiments at scale and generate accurate results

A product development culture with evidence-based hypothesis testing at its core

Background

Over the last two years, Spotify has invested in building a new Experimentation Platform (see Spotify’s New Experimentation Platform (Part 1) and Spotify’s New Experimentation Platform (Part 2)) which, to a large extent, solves the first point. The experimentation platform is already mature and offers most of the functionality and flexibility required; in fact, it often improves faster than individual teams can adopt its new functionality. In addition, extensive documentation and best practices have been established for the platform’s functionality, which helps with the process of setting up tests.

But the effort to adopt a truly data-informed product development process doesn’t stop there — it requires continuous effort and push.

Experimentation practices and data-driven product development are often considered a data science topic, and that is true to some extent. Data scientists usually have a deeper understanding of statistics and experimentation, so they tend to be the most excited about adopting a data-driven product development culture. The Search team at Spotify consists of several engineering teams, an array of product managers, a product insights team with a number of data scientists, and a product area leads group. You’d probably guess that engineering teams have to be pretty independent from data scientists to avoid creating bottlenecks, and you’d be right.

Defining best practices for experimentation is made relatively easy by the hundreds of articles that explain how to do things like perform a power analysis, choose metrics, interpret a p-value, etc. It is, however, less easy to integrate those practices day to day and turn them into habits. This requires creating an environment where adapting ways of working to improve our experimentation culture feels empowering, rather than uncomfortable, in a diverse team with different disciplines and varying levels of knowledge.

Read on to find out more about the two key drivers of our success and the concrete actions we took over the last year.

Key driver 1 – Roadmap

When it comes to adopting a truly data-informed product development process, the road to the end goal can feel overwhelming both for the engineering team and for data scientists. It is crucial that the plan towards that goal takes into account the maturity, ability, and bandwidth of the teams. In large organizations, these aspects often vary a lot from team to team and across parts of the organization. Too much too soon will lead to people feeling overwhelmed and giving up; too little too seldom and the momentum and excitement will be lost. To make this balancing easier, we created a roadmap with three simple steps:

Individual experiment quality — Adopt quality standards for each experiment to meet

Cross-experiments quality — Coordinate individual experiments

Measure total business impact — Estimate the cumulative impact of all the features released in a quarter

Step 1 — Individual experiment quality

For an experiment to bring the promised gold-standard value to a product decision, many things have to be in place: proper hypothesis, well-defined metrics to evaluate the hypothesis, decision rules set before the experiment starts, etc. These individual practices can be introduced to the teams gradually, making each one easy to integrate into the workflow. Combined, they raise each experiment to the gold-standard level. The main goal of Step 1 is to ensure that each experiment leads to a highly reliable data-informed product decision. Once the quality, and thereby the value, of each experiment starts to improve, the number of experiments will often also increase.

At Spotify Search, we continue to scale the speed of iteration in each team using experiments, and thus need to make each decision transparent and self-explanatory for anyone, e.g., new team members or those external to the team. For this reason, it is important to guide individual teams during Step 1 into using shared practices. Examples of such shared practices are using common conventions for naming experiments, writing hypotheses, and logging decisions after the experiment ends. We have applied this by having our Search data science team provide and advocate for simple templates for test specifications and experiment setups. These templates help remind each team member of important steps and considerations for each test while also streamlining the practices across teams.
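To make the idea of a shared test-specification template concrete, here is a minimal sketch in Python. It is not the template used at Spotify: the class names, field names, and the `missing_fields` check are all hypothetical, chosen only to show how a template can remind people of the hypothesis, metrics, and decision rules before a test launches.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class DecisionRule:
    """A rule agreed on before launch (hypothetical structure)."""
    metric: str
    direction: str       # "increase" or "decrease"
    action_if_met: str   # e.g. "ship" or "stop"


@dataclass
class ExperimentSpec:
    """Illustrative test specification; every field name is an assumption."""
    name: str
    hypothesis: str
    success_metrics: List[str] = field(default_factory=list)
    guardrail_metrics: List[str] = field(default_factory=list)
    decision_rules: List[DecisionRule] = field(default_factory=list)
    decision_log: str = ""  # filled in after the experiment ends

    def missing_fields(self) -> List[str]:
        """Return the required sections that are still empty."""
        required = {
            "hypothesis": self.hypothesis.strip(),
            "success_metrics": self.success_metrics,
            "decision_rules": self.decision_rules,
        }
        return [section for section, value in required.items() if not value]

    def ready_to_launch(self) -> bool:
        """A spec is ready only when no required section is empty."""
        return not self.missing_fields()
```

A draft with an empty hypothesis would report `"hypothesis"` in `missing_fields()`, which is the kind of gentle reminder a template is meant to give before the experiment starts.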

Step 2 — Cross-experiments quality

Another important aspect of experimentation at Spotify is coordination. Most of the time, teams have to run several experiments on top of each other, and some of these experiments must be coordinated in the sense that the same user cannot be in two experiments at the same time. The Search team is no exception and coordinating experiments is a big part of our effort.

Spotify’s experimentation platform has flexible coordination capabilities, so coordinating experiments at scale is possible, but it requires cross-team collaboration and alignment. The Search data science team helps experimenting teams find the right setup for the right experiment, and provides guidelines to avoid running out of users that are available to try our new search features. This effort is again helped by the Step 1 alignment, where template experiments have pre-selected coordination behaviors that nudge team members to select the appropriate setup.
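One common way to guarantee that the same user is never in two coordinated experiments at once is to hash users into buckets and give each experiment a disjoint bucket range. The sketch below illustrates that general technique only; it does not describe how Spotify's experimentation platform is implemented, and `NUM_BUCKETS`, the salt, and all function names are assumptions.

```python
import hashlib
from typing import Dict, Optional, Tuple

NUM_BUCKETS = 1000  # assumed granularity of the shared user pool

Allocations = Dict[str, Tuple[int, int]]  # experiment -> [start, end) buckets


def bucket_for(user_id: str, layer_salt: str) -> int:
    """Deterministically map a user to a bucket in [0, NUM_BUCKETS)."""
    digest = hashlib.sha256(f"{layer_salt}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % NUM_BUCKETS


def validate_allocations(allocations: Allocations) -> None:
    """Raise ValueError if any range is out of bounds or overlaps another."""
    previous_end = 0
    for name, (start, end) in sorted(allocations.items(), key=lambda kv: kv[1]):
        if not 0 <= start < end <= NUM_BUCKETS:
            raise ValueError(f"{name}: invalid bucket range ({start}, {end})")
        if start < previous_end:
            raise ValueError(f"{name}: overlaps another experiment")
        previous_end = end


def free_buckets(allocations: Allocations) -> int:
    """Buckets still available for new experiments (assumes valid ranges)."""
    return NUM_BUCKETS - sum(end - start for start, end in allocations.values())


def assign_experiment(user_id: str, layer_salt: str,
                      allocations: Allocations) -> Optional[str]:
    """Return the single experiment owning the user's bucket, if any."""
    bucket = bucket_for(user_id, layer_salt)
    for experiment, (start, end) in allocations.items():
        if start <= bucket < end:
            return experiment
    return None
```

Because the ranges are disjoint, each user lands in at most one experiment, and `free_buckets` gives a rough view of how close the coordinated experiments are to running out of available users.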

Step 3 — Measure total business impact

The final step of our plan to adopt a truly data-informed product development process is to measure the total impact of a product initiative over some relevant reporting time period, e.g., a quarter. The goal is to answer the question “What is the combined causal effect of all our product changes on our key metrics?” This can be done in several ways. A simple and naive total impact analysis is to sum the individual estimated causal effects from the experiments of all shipped product changes. At Spotify Search we use quarterly holdbacks to estimate the total impact of the product development program. This is achieved by holding a set of users back from all product changes during the quarter. At the end of the quarter, we run one experiment on the users in the holdback, where one group is given no product change (control group), and one group is given all the shipped product changes (treatment group). This yields an unbiased estimator of the total causal effect of all product changes and directly answers the question posed above.

Figure 1: During the quarter

Figure 2: After the quarter
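The end-of-quarter comparison on the holdback users can be sketched as a plain difference in means between the two groups. The snippet below is an illustration under simplifying assumptions (one numeric metric per user, a normal-approximation confidence interval with unpooled variances); it is not the analysis the experimentation platform performs, and the function names are hypothetical. The naive sum of per-experiment effects is included only for contrast.

```python
import math
from statistics import NormalDist, mean, variance
from typing import Sequence, Tuple


def holdback_effect(control: Sequence[float], treatment: Sequence[float],
                    confidence: float = 0.95) -> Tuple[float, Tuple[float, float]]:
    """Estimate the total effect as mean(treatment) - mean(control).

    control:   metric values for holdback users given no product changes
    treatment: metric values for holdback users given all shipped changes
    Returns the point estimate and a normal-approximation interval.
    Each group needs at least two observations.
    """
    diff = mean(treatment) - mean(control)
    # Standard error of the difference, using unpooled sample variances.
    se = math.sqrt(variance(treatment) / len(treatment)
                   + variance(control) / len(control))
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return diff, (diff - z * se, diff + z * se)


def naive_total_effect(per_experiment_effects: Sequence[float]) -> float:
    """Naive alternative: add up the effects estimated in each experiment."""
    return sum(per_experiment_effects)
```

The two numbers need not agree. The naive sum ignores, for example, any interaction between product changes, while the holdback comparison measures all shipped changes together on the same users.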

An important aspect of these steps is that they grow in two directions: from within one team to across teams, and from within each individual experiment to across several experiments in the same initiative.

It is not uncommon that experimentation initiatives in larger organizations are driven by the middle-level decision makers’ need for better total business impact estimates to guide future priorities. However, it is important to acknowledge that measuring the impact of a full product initiative with decent precision is not a trivial thing to achieve. This is especially challenging when product evaluation is not already a natural part of the product development culture. Our belief and experience is that the quickest way to achieve proper overall product evaluation is to build from the bottom up with solid product evaluation practices in each team. Moreover, we have found that each of these steps has brought a lot of value to our product development cycle beyond measuring impact, e.g., product quality awareness, better practices around product evaluation in general, knowledge sharing, and alignment across product teams.

Key driver 2 – Constant injection of energy

Starting the fire

Building great experimentation practices is like starting a fire with wet wood. It won’t start just because you provide a spark, but if you give it care and provide it with the right resources, the fire will eventually start and burn by itself. It’s the same for experimentation practices. You will need to start with a source of energy, pulling and pushing in the right direction, and you will need to continuously inject energy, for a long time.

Adopting new practices that sometimes conflict with existing habits is a big challenge — it will take time and effort to convince teams of the value that experimentation brings to the product development process.

Our learnings reveal that at the beginning of the journey, a lot of things have to be boosted and done for the engineering teams rather than by them. Actually setting up and reviewing tests for the teams allows them to see for themselves that the amount of work required on a day-to-day basis is manageable and, most importantly, how much value those efforts bring to the team and product. Doing certain tasks for the teams will also deepen the trust between the experimentation advocate and the teams, reassuring engineers that they will get support along the way.

In the early experimenting stage of any team, it is critical to accept that some tests will not be perfect and some product decisions, therefore, not fully data informed. However, it is of the utmost importance that the teams get sufficient support to succeed with an experiment: continuously injecting support and energy is what starts the fire and keeps it burning. In our experience, the key to successfully starting experimentation is endurance and continuity. With sufficient time, even the smallest lighter can make wet wood burn.

Maintaining the fire

Just as we had to scale our practices between teams, we had to scale the injection of energy. The solution presented itself in the form of individuals across Search teams who developed an interest and expertise in experimentation and became precious new sources of energy. As we progressed as a team towards more data-driven product development, the expertise and support gradually transferred from the advocating team to individual team members who naturally started to inject energy.

In big and agile organizations, there are often organizational changes made to fit new needs, as well as new people joining, which makes it harder to keep practices aligned. So even if the fire burns by itself at some point, it is still crucial to have people dedicated to injecting energy, restarting and boosting the fire continuously.

Spotify Search in 2021

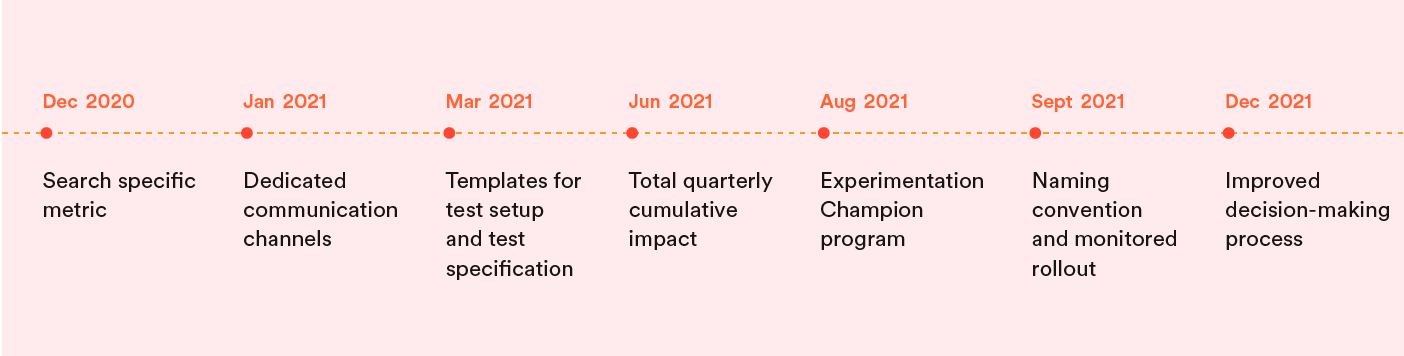

Now that we have covered the major steps and principles that led Spotify Search on the journey to data-driven product development, we will look in more detail at the specific actions that were taken in Search last year.

December 2020: Unlock the use of all features from our experimentation platform (like sample size calculation or result page) by integrating our Search-specific metric into the system.

January 2021: Creation of a dedicated weekly forum and communication channel to make information flow better between the search engineering teams and data scientists.

March 2021: Standardization of templates for the test setup and test specification, with pre-filled parameters. This unlocked the possibility for anyone to create and launch an experiment with basic search-specific parameters without prior knowledge.

June 2021: End-of-quarter cumulative experiment. At this point, most of the new features we developed for users were tested with an experiment, so we had all the ingredients to start measuring the cumulative impact of all the features we launch in Search over a quarter.

August 2021: Experimentation Champion program. Scale knowledge-sharing when new members join by establishing one person from each team as a dedicated experimentation champion. The role of the champion is to help spread knowledge between the Search data science team and their own.

September 2021: Naming convention for experiments. As part of our effort to scale and make each test more transparent, we created a naming convention to be able to determine from the name of the experiment which quarter and initiative this experiment was part of.

September 2021: The team committed to shipping all product changes gradually using monitoring. This was a great milestone for us! It showed that the whole team was feeling confident enough in our practices to commit to this objective.

October 2021: Decision-making process improvement. To ensure that we made good decisions for each experiment we ran, the experiment design needed to contain three elements: Hypothesis, Metrics, and Decision rules.
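To illustrate the naming convention from September 2021, here is a small sketch of how a structured experiment name lets anyone recover the quarter and initiative. The actual convention used in Search is not described in this post, so the `<year>q<quarter>_<initiative>_<description>` format, the example name, and the function below are purely hypothetical.

```python
import re
from typing import NamedTuple, Optional

# Assumed format: <year>q<quarter>_<initiative>_<description>,
# for example "2021q3_podcast-search_new-ranker" (a made-up name).
NAME_PATTERN = re.compile(
    r"(?P<year>\d{4})q(?P<quarter>[1-4])"
    r"_(?P<initiative>[a-z0-9-]+)"
    r"_(?P<description>[a-z0-9-]+)"
)


class ExperimentName(NamedTuple):
    year: int
    quarter: int
    initiative: str
    description: str


def parse_experiment_name(name: str) -> Optional[ExperimentName]:
    """Return the parts of a conforming name, or None if it does not conform."""
    match = NAME_PATTERN.fullmatch(name)
    if match is None:
        return None
    return ExperimentName(
        year=int(match["year"]),
        quarter=int(match["quarter"]),
        initiative=match["initiative"],
        description=match["description"],
    )
```

A check like this could run when an experiment is created, so that names that do not follow the convention are caught early, and the experiments belonging to one quarter and initiative can be grouped later without manual bookkeeping.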

The key to the success of this roadmap was that the team only needed to focus on one small step at a time. Implementing these small steps over the course of just one year led to an impressive change in Search experimentation practices.

In 2022: keep the fire burning!

In 2021 we made great progress improving each experiment’s quality, improving coordination between experiments, and measuring global impact. This year in Search, we will take one more step toward truly building and improving our product in a data-informed way by integrating experimentation into a more global evaluation process for each initiative of our product development. If you’re interested in joining the team, take a look at our open jobs!

Conclusion

Approaching this problem, I thought that changing product development culture could only come from a change in expectations and incentives from leaders higher up in the organization. However, during the last year, I have seen many people from several disciplines stepping in and taking responsibility for the progress of our experimentation practices. It is now common to see engineers discussing whether they should stop a product change from being launched to prevent a negative user experience. We have PMs on our team driving the education of PMs at Spotify globally on the topic of experimentation.

To me this evolution of behavior is proof that it is possible to improve our product evaluation process substantially by taking three simple measures: providing a safe environment for teams to evolve their practices, continuously injecting energy and support, and ensuring that the teams can see, with their own eyes, the benefits of the changes they’ve made.