It’s All Just Wiggly Air: Building Infrastructure to Support Audio Research

TL;DR We just open sourced Klio — our framework for building smarter data pipelines for audio and other media processing. Based on Python and Apache Beam, Klio helps our teams process Spotify’s massive catalog of music and podcasts, faster and more efficiently. We think Klio’s ease of use — and its ability to let anyone leverage modern cloud infrastructure and tooling — has the potential to unlock new possibilities in media and ML research everywhere, from big tech companies to universities and libraries.

But now we’re getting ahead of ourselves. What exactly is Klio and what does it do? Let’s start with the problem of audio itself.

Audio is hard

Really, sound is just wiggly air. At a basic level, every violin concerto, love song, dog bark, and knock-knock joke is the result of air compressing and vibrating, which we sense as it moves bones and hair in our ears. Sound is an invisible force that reaches us in ways that we can’t see, but can feel. And that’s what also makes audio so difficult for machines to parse: Humans can tell the difference between a swooning vocal, a danceable beat, and a buzzing bee. Can we teach machines to hear those differences, too?

Machine listening, the field of research focused on getting computers to understand audio, combines expertise and methods from signal processing, music information retrieval, and machine learning, so that all those vibrations in the air result in data that makes a bit more sense for an engineer to work with. Once audio is encoded, compressed, and stored on a computer, you’re left with ones and zeroes packed into relatively large binary files. At a glance, a guitar solo can look just like a yodel. So, how do we begin to make sense of it all? And at scale?

One problem multiplied 60 million times

Processing massive amounts of large binary files: It was a problem that was only getting bigger at Spotify. We’re adding about 40,000 songs a day and are processing our music catalog — about 60 million songs — on a regular basis, with multiple teams around the world doing work at the same time. Besides the problem of engineering that kind of scale and parallelization, we also wanted a way to tie the processing jobs more closely with the work our audio and ML research teams were doing.

We were already building sophisticated data pipelines that supported AI and ML jobs using Scio, a precursor to Klio. Scio proved to be a flexible, scalable framework that any team could use to build smarter data pipelines at scale. By tying together large database queries, map-filter-reduce operations, natural language processing, and ML models, teams could create better, more personalized playlists, like Discover Weekly, Release Radar, and dozens of others.

So, Scio created a platform for processing massive amounts of data about the audio. But what about processing the audio itself?

A uniquely Spotify problem, a uniquely Spotify solution

While processing metadata for the libraries of 299+ million users is impressive, it’s not the same as processing the content itself: the tens of millions of binary audio files that Spotify hosts and serves all over the world. On top of that, JVM-based languages weren’t interfacing well with our Python-based research tools for audio and ML.

We knew that if we could build data pipelines that supported large-scale audio processing, there were untold features and personalizations waiting to be unlocked. We just needed a framework that supported it — and that worked as well with our research tools as our engineering tools.

In 2019, an ad hoc team of data engineers, ML researchers, and audio experts outlined the requirements for creating a framework designed especially for processing media. Scio was a model of success, but still just a starting point. This new framework would need to support:

- Large-file input/output: We wanted to transform audio, videos, images — all kinds of heavy-duty binary media files — in dozens of ways, with both streaming and batch processing.

- Scalability, reproducibility, efficiency: When you’re working with a dataset as large as the world’s music, as well as a burgeoning ecosystem of podcasts, you don’t want to have to redo your work over and over again.

- Closer collaboration between researchers and engineers: This translated into support for both Python (the lingua franca of both audio processing and ML) as well as non-Python dependencies (e.g., libsndfile, ffmpeg, etc.).

In short, we needed a framework that could production-ize audio processing. This wasn’t just about creating data pipelines for media. It was about doing it at Spotify scale and with support for the latest audio and ML research. Let’s dig into that last requirement first.

Researchers, engineers, and Python: The importance of speaking a common language

Around this time, we noticed that both our researchers and engineers were beginning to get a little tired of the roadblocks preventing their audio work from getting adopted. Audio researchers were making promising breakthroughs, but the cost of getting new approaches integrated into shipping products was becoming increasingly high.

As much as their counterparts in data and ML engineering wanted to help, those engineers were spending much of their time looking after several distinct, bespoke systems for production audio processing, all built and customized for individual teams. In other words, we had smart people all over the company working on audio, but our world-class researchers and engineers couldn’t work together until most of the research had been rewritten by the engineers. And even then, all that work and effort was siloed.

The solution was simple: Python. It’s the native language of research and well-suited for the engineering problems at hand. Most importantly, allowing everyone to speak without a translation layer puts everyone in a position to focus on what they excel at. Audio and ML researchers get to focus on experimentation and building cutting-edge research tools. Engineers get to focus on building clean, reliable code.

What is Klio?

Klio is a framework for building smarter data pipelines for audio and other binary files, enabling you to production-ize media processing at scale.

- Streamlined Apache Beam for a more ergonomic, Python-native experience for researchers and engineers

- Open graph of job dependencies with support for top-down and bottom-up executions

- Integration with cloud processing engines for managed resources and autoscaling production pipelines

- Containerization of custom dependencies for simplified development and easily reproducible deployment

- Batch and streaming pipelines for continuous processing

Apache Beam under the hood, Klio in the driver’s seat

It’s no surprise, then, that Klio is built on top of Apache Beam for Python, while also aiming to offer a more Pythonic experience of Beam. Klio also offers several advantages over plain Python Beam for media processing: a substantial reduction in boilerplate code (60% on average), a focus on heavy file I/O, and standards for connecting multiple streaming jobs together in a job dependency graph (with top-down and bottom-up execution). This allows teams to focus immediately on writing new pipelines, knowing that they can easily be extended and connected later.
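To make the idea of top-down and bottom-up execution concrete, here is a minimal sketch in plain Python. It is a toy model, not Klio’s API: the `JobGraph` class, its method names, and the job names are all hypothetical, and the sketch assumes a simple tree-shaped graph. Top-down runs a job and everything downstream of it; bottom-up runs a requested job and only those upstream jobs whose output is still missing.

```python
from collections import defaultdict


class JobGraph:
    """Toy job dependency graph (hypothetical, not Klio's actual API)."""

    def __init__(self):
        self.parents = defaultdict(list)   # job -> jobs it depends on
        self.children = defaultdict(list)  # job -> jobs that consume its output
        self.outputs = set()               # (job, item_id) pairs already produced

    def add_edge(self, parent, child):
        self.parents[child].append(parent)
        self.children[parent].append(child)

    def _run(self, job, item_id, log):
        # Stand-in for real processing: record that the output now exists.
        self.outputs.add((job, item_id))
        log.append(job)

    def top_down(self, job, item_id, log=None):
        """Run `job`, then every job downstream of it."""
        log = [] if log is None else log
        self._run(job, item_id, log)
        for child in self.children[job]:
            self.top_down(child, item_id, log)
        return log

    def bottom_up(self, job, item_id, log=None):
        """Run `job`, first running only upstream jobs whose output is missing."""
        log = [] if log is None else log
        for parent in self.parents[job]:
            if (parent, item_id) not in self.outputs:
                self.bottom_up(parent, item_id, log)
        self._run(job, item_id, log)
        return log


graph = JobGraph()
graph.add_edge("downsample", "beat_tracking")
graph.add_edge("downsample", "loudness")

# Bottom-up: "loudness" needs the "downsample" output, which does not exist yet.
first = graph.bottom_up("loudness", "track-1")        # ["downsample", "loudness"]
# The downsampled output now exists, so only the requested job runs.
second = graph.bottom_up("beat_tracking", "track-1")  # ["beat_tracking"]
# Top-down: run the job and everything downstream of it.
third = graph.top_down("downsample", "track-2")
```

In this sketch, bottom-up execution is what keeps a newly added job from re-running work its parents have already done.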

This ease of use and streamlining of Apache Beam means we can get our state-of-the-art audio research into people’s hands and ears, faster. And while Klio offers this more opinionated way to use Apache Beam for common media processing use cases by default, it also allows the use of core Python Beam at any time if Klio’s opinions don’t fit your use case.

Efficiency, efficiency, efficiency (DRY: Don’t Repeat Yourself)

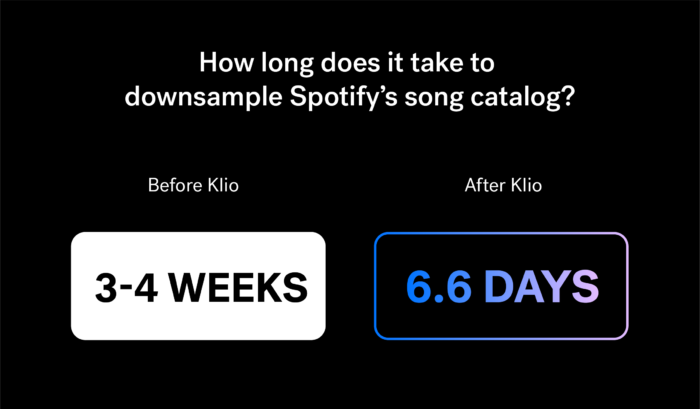

When we were developing Klio, we decided to test it by downsampling every track in Spotify’s 60-million-song catalog, which amounts to well over 100 million audio files in all (including multiple releases of the same song). Downsampling is often the first step of audio analysis, so it’s a great benchmark of what real-world performance might look like. Previously, the fastest we had accomplished this at Spotify was about three or four weeks. With Klio, we did it in six days and cut costs by a factor of four. When you think about the number of songs in our catalog, and our quickly growing podcast library, Klio can have a tremendous impact on our teams and our business.
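For readers unfamiliar with the term, downsampling means reducing the number of samples per second in an audio signal. The sketch below shows the idea in plain Python using block averaging, a deliberately crude approach chosen for readability. It is not the method used in the benchmark above; the `downsample` function is hypothetical, and a production pipeline would more likely rely on a dedicated resampler with a proper low-pass filter.

```python
def downsample(samples, factor):
    """Reduce the sample rate by an integer factor using block averaging.

    Averaging each block of `factor` samples acts as a very rough low-pass
    filter before the rate is reduced.
    """
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    usable = len(samples) - len(samples) % factor  # drop the ragged tail
    return [
        sum(samples[i:i + factor]) / factor
        for i in range(0, usable, factor)
    ]


# Seven samples reduced by a factor of 2: the last sample is dropped.
reduced = downsample([0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0], 2)  # [0.5, 2.5, 4.5]
```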

You’ll find these kinds of optimizations throughout Klio’s implementation. Klio pipelines improve processing time and costs by avoiding duplicate work on already processed audio. And the framework is opinionated: it encourages engineers and researchers to write a pipeline focused on one thing, like finding the timestamps of all the beats in a song or measuring a song’s loudness. By creating reusable building blocks, Klio allows researchers to build more easily on top of previous research and create graphs of pipelines, leading to features like infinite playlists optimized for your current mood, internal tools that help automate the review of new content, and powerful data that personalizes the Spotify experience for each user.
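The "avoid duplicate work" idea can be sketched in a few lines of plain Python. This is an illustration under stated assumptions rather than Klio’s implementation: the `process_if_missing` function, the `.out` file naming, and the `force` flag are all hypothetical. The point is simply that a job checks whether its output already exists before doing any expensive processing.

```python
import tempfile
from pathlib import Path


def process_if_missing(item_id, output_dir, transform, force=False):
    """Run `transform` only if no output exists yet for `item_id`.

    Returns True if work was done and False if it was skipped.
    `force=True` reprocesses even when an output is already present.
    """
    out_path = Path(output_dir) / f"{item_id}.out"
    if out_path.exists() and not force:
        return False  # already processed: skip the expensive step
    out_path.write_bytes(transform(item_id))
    return True


def fake_transform(item_id):
    # Stand-in for real audio processing.
    return f"processed {item_id}".encode()


with tempfile.TemporaryDirectory() as output_dir:
    did_work = process_if_missing("track-1", output_dir, fake_transform)   # True
    did_again = process_if_missing("track-1", output_dir, fake_transform)  # False
```

A single-purpose job written this way can be rerun over a whole catalog and will only spend time on items it has not seen.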

Scale, reproducibility, and clouds. No infra team required.

Klio can be run locally, but it really shines in the cloud — and is ready-made for it. In order to achieve the large-scale processing and reproducibility that we require at Spotify, Klio leverages the best parts of modern cloud infrastructures (like managed resources to autoscale production pipelines) and tooling (like containerization for easier deployments).

Klio was designed to be cloud agnostic, and the underlying Apache Beam project is designed to run workloads across any data workflow engine. Right now, it’s configured to work with Google Cloud Platform, but we welcome contributions to help get Klio running on AWS, Azure, or another infrastructure.

One thing to note: Current limitations in Beam Python prevent all of its features from being used on every engine, but we expect increased compatibility with Apache Flink and Apache Spark as Apache Beam extends its underlying compatibility with these engines. Preliminary work has also been done testing Klio on AWS and S3 using Klio’s Direct Runner.

We think this cloud integration (infrastructure as a service) can unlock production bottlenecks, as well as encourage experimentation. Engineering teams can rely on Klio to standardize media processing — using data processing and monitoring tools they’re already familiar with — rather than creating architectures from the ground up. Klio’s ability to autoscale production pipelines to handle variable workloads lets engineers focus on the next thing, rather than constantly tuning workloads.

From Sing Along to dolphin songs: Open source and the great unknown

Klio began as a proof of concept a little less than two years ago. It was invented out of necessity — to overcome challenges we were facing internally. But even from the very beginning, it was built with the intention of being free and open source software.

As we’ve seen with Backstage, our open platform for building developer portals, Spotify is committed to open source and developer experience. We want to make the lives of engineers easier, so they can focus on building amazing things. So we’re excited to see not only how Klio can help others and advance audio/media research, but also what we can learn from others’ contributions and how Klio can evolve as a result.

Spotify has been doing this kind of large-scale audio analysis for nearly a decade, both before and after Klio, extracting and transforming tracks in our catalog on a weekly, daily, and streaming basis. Audio analysis algorithms power our Audio Features API for fingerprinting songs by their unique attributes (illustrated in this interactive New York Times article); in-house tools, like our automated content review screener; and market-specific features, like our Sing Along feature in Japan, which separates the vocals from the instruments as songs are uploaded to the catalog to create interactive versions that people can sing along with.

But as we saw when we open sourced Backstage, the open source community will come up with use cases we never dreamed of. And since Klio enables anyone to do this kind of heavy-duty media processing at scale (not just big tech companies), we’re particularly curious to see what academics and research institutions will build with it. (Dolphin speech, anyone?)

So, thank you to the Klio team and to everyone who’s ever used Klio or contributed to its development over the years (including its sibling framework, Scio). And thank you to all those reading this right now and who will contribute to its development in the future. It’s a product that only Spotify could have built. But we’re even more proud now that it’s out there for the world to share. Now let’s get started.

Tags: machine learning